How to visualize internal AI adoption without creating a feature factory

Martin Börlin

#ai-strategy #north-star-framework #internal-ai

Most AI strategies look convincing in slides.

Far fewer survive contact with everyday work.

Tools are bought. Pilots are launched. Teams are told to experiment. A few quick wins are celebrated. But inside the organization, the hardest questions often remain unanswered.

- What do we actually want AI-assisted work to improve?

- Which workflows matter most?

- Where do we need guardrails?

- How do we avoid speeding up the wrong work?

That is where things usually start to drift. AI adoption becomes visible, but the expected business outcome remains vague.

A tool stack is not a strategy

A company can have high AI activity and still have low AI maturity.

People prompt. Teams experiment. New tools show up in budgets and screenshots. Yet none of that guarantees that the company is becoming meaningfully better at operating, learning, or delivering value.

That is why I think many companies do not really have an AI strategy.

They have a list of tools and experiments.

Start with outcomes, not adoption

In this example, the focus is not customer-facing AI. The focus is internal leverage.

That means better use of time, faster learning, shorter paths from idea to validation, and less manual work in places where it actually matters.

It also means being careful about what not to optimize for.

"Number of AI users" can be an interesting signal.

"Number of prompts" can be a curiosity.

But neither tells you much about whether the business is improving.

A better question is:

What should be meaningfully better in the company if AI-assisted work is actually working?

That is where the strategy starts.

Why this needs structure

AI tends to amplify whatever system it enters.

If the company already has clarity, good prioritization, and strong product judgment, AI can increase leverage.

If the company is already fragmented, reactive, or too focused on output, AI can make those problems worse at a much higher speed.

This is one reason I think AI increases the need for clarity rather than reducing it.

It gets easier to build.

It does not automatically get easier to decide what is worth building.

That is also why faster delivery can easily turn into a new feature factory if product thinking, problem framing, and quality guardrails do not improve at the same time.

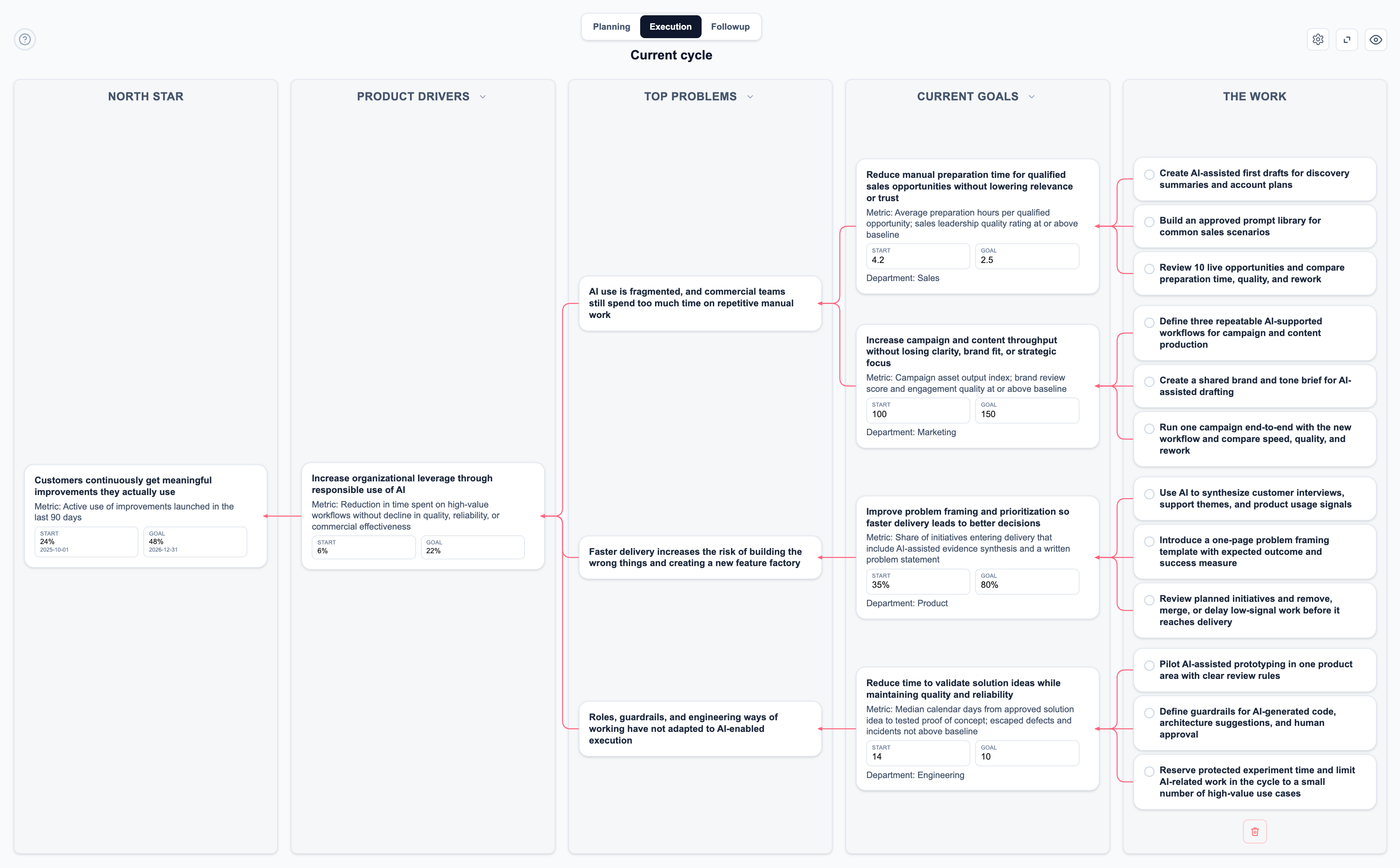

What this looks like in a North Star Framework (NSF) Board

This is exactly the kind of change that becomes easier to discuss when you visualize it.

Instead of talking about AI as a vague transformation program, you can place it inside a clear structure:

- a North Star that describes the long-term value the company wants to create

- a Product Driver that describes the internal leverage AI should create

- a small number of Top Problems that explain what is standing in the way

- Current Goals for different functions

- a limited amount of The Work for the current cycle

One important clarification: in this example, the North Star is intentionally generic. It represents the company’s long-term direction and value creation. AI adoption is not the goal in itself. It is a means to help the organization move more effectively toward that goal.

That turns "our AI strategy" from a slide into something teams can actually move inside.
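For readers who think in data structures, the layers listed above can be sketched as nested records. This is only an illustration: the class names, the field names, the `outline` helper, and the choice to hang both Top Problems and Current Goals directly under a Product Driver are assumptions made for this example, not a definition of how an NSF Board is stored. The angle-bracket strings are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class CurrentGoal:
    function: str   # hypothetical field: the function that owns the goal
    statement: str
    work: list[str] = field(default_factory=list)  # The Work for this cycle


@dataclass
class ProductDriver:
    statement: str
    # Assumption: problems and goals both sit directly under the driver.
    top_problems: list[str] = field(default_factory=list)
    current_goals: list[CurrentGoal] = field(default_factory=list)


@dataclass
class Board:
    north_star: str
    product_drivers: list[ProductDriver] = field(default_factory=list)


def outline(board: Board) -> list[str]:
    """Flatten the board into indented lines, top layer first."""
    lines = [f"North Star: {board.north_star}"]
    for driver in board.product_drivers:
        lines.append(f"  Product Driver: {driver.statement}")
        for problem in driver.top_problems:
            lines.append(f"    Top Problem: {problem}")
        for goal in driver.current_goals:
            lines.append(f"    Current Goal ({goal.function}): {goal.statement}")
            for item in goal.work:
                lines.append(f"      The Work: {item}")
    return lines


# Placeholder content only; replace with the organization's own wording.
board = Board(
    north_star="<the company's long-term value creation>",
    product_drivers=[
        ProductDriver(
            statement="<internal leverage AI should create>",
            top_problems=["<what is standing in the way>"],
            current_goals=[
                CurrentGoal(
                    function="<function>",
                    statement="<goal for this cycle>",
                    work=["<work item>"],
                )
            ],
        )
    ],
)

print("\n".join(outline(board)))
```

The point of the sketch is that every work item can be traced upward through a goal and a driver to the North Star, which is what makes the board something to navigate rather than a slide.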

One Product Driver, multiple functions

For this scenario, one Product Driver is enough:

Increase organizational leverage through responsible use of AI

Here, "responsible" matters.

It means using AI in ways that improve leverage without lowering quality, reliability, trust, judgment, or compliance. It means adding guardrails where needed, keeping humans in the loop where it matters, and avoiding the very common trap of confusing speed with progress.

Under that Product Driver, the most useful Top Problems are not "we need more AI."

They are things like:

- AI use is fragmented, and commercial teams still spend too much time on repetitive manual work

- Faster delivery increases the risk of building the wrong things and creating a new feature factory

- Roles, guardrails, and engineering ways of working have not adapted to AI-enabled execution

Those problems create a useful bridge between ambition and action.

Different functions need different Current Goals

One of the biggest mistakes in AI programs is assuming that every function should improve in the same way.

They should not.

Product, Engineering, Sales, and Marketing have different workflows, different constraints, and different ways of creating value. That should be visible in the board.

In one cycle, that might look something like this:

Product

Improve problem framing and prioritization so faster delivery leads to better decisions

The point here is not to produce more initiatives. It is to raise the quality of what enters delivery in the first place.

Engineering

Reduce time to validate solution ideas while maintaining quality and reliability

The point is not to maximize generated code. It is to learn faster without lowering the bar on judgment, architecture, and system quality.

Sales

Reduce manual preparation time for qualified sales opportunities without lowering relevance or trust

The point is not more generic AI-written outreach. It is better leverage in the parts of the sales process where preparation steals time from real customer dialogue.

Marketing

Increase campaign and content throughput without losing clarity, brand fit, or strategic focus

The point is not content volume for its own sake. It is to improve speed where repetition exists, while protecting quality and avoiding noise.

That is one of the strengths of using NSF here. Different parts of the organization can contribute to the same Product Driver without pretending they are solving the same problem in the same way.

Keep the cycle small enough to learn

Another trap is trying to do too much at once.

The show must go on. Product development, commercial work, maintenance, and operations do not pause because the company has decided to "go big on AI."

That means AI-related change has to be introduced at a pace the organization can absorb.

In practice, this usually means:

- a small number of Current Goals per cycle

- a limited amount of The Work under each goal

- protected room for experimentation

- enough slack to learn before scaling what seems promising

Too many AI initiatives in one cycle usually create noise rather than progress.

A smaller number of focused experiments tends to produce better learning and more credible follow-through.

The Work should reflect that reality

This is also why The Work matters so much.

For each Current Goal, I would keep it to two or three meaningful work items.

For example:

For Product:

- synthesize customer interviews, support themes, and usage signals with AI support

- introduce a lightweight problem-framing template before work enters delivery

- review planned initiatives and remove low-signal work

For Engineering:

- pilot AI-assisted prototyping in one product area

- define clear guardrails for AI-generated code and human approval

- reserve protected experiment time during the cycle

For Sales:

- create AI-assisted first drafts for discovery summaries and account plans

- build an approved prompt library for common sales situations

- compare time saved, quality, and rework across real opportunities

For Marketing:

- define a few repeatable AI-supported workflows for campaign production

- create a shared brand and tone brief for AI-assisted drafting

- run one campaign end-to-end and compare speed, quality, and rework

That is enough to create movement without pretending the whole organization can reinvent itself in one cycle.
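If the board lives in a spreadsheet or a script, the "two or three work items" guideline can be checked mechanically. The following is a minimal sketch under stated assumptions: the function name `overloaded_goals`, the constant, and the dictionary layout are hypothetical, and the limit of three simply encodes the rule of thumb from this post.

```python
# Assumed limit, taken from the "two or three meaningful work items" guideline.
MAX_WORK_ITEMS_PER_GOAL = 3


def overloaded_goals(
    work_by_goal: dict[str, list[str]],
    limit: int = MAX_WORK_ITEMS_PER_GOAL,
) -> list[str]:
    """Return the goals that carry more work items than the limit."""
    return [goal for goal, items in work_by_goal.items() if len(items) > limit]


# Example cycle using two of the functions described above.
cycle = {
    "Product": [
        "synthesize customer interviews, support themes, and usage signals",
        "introduce a lightweight problem-framing template",
        "review planned initiatives and remove low-signal work",
    ],
    "Engineering": [
        "pilot AI-assisted prototyping in one product area",
        "define guardrails for AI-generated code and human approval",
        "reserve protected experiment time during the cycle",
    ],
}

too_full = overloaded_goals(cycle)
print(too_full or "Every goal is within the limit")
```

A check like this does not judge whether the work is the right work. It only flags a cycle that has grown beyond what the organization can plausibly absorb.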

The engineering role is already changing

This deserves its own point.

One of the most interesting discussions in AI-enabled organizations right now is what happens to role expectations, especially in engineering.

I do not think this means engineers become less important. Quite the opposite.

But I do think many role descriptions are already becoming outdated.

In some contexts, phrases like "should code" or "writes production code" may become less central than:

- strong system understanding

- product thinking

- architectural judgment

- ability to validate and refine AI-generated output

- ability to understand risk, quality, and trade-offs

The value shifts upward from pure production to direction, judgment, and systems thinking.

That change is not limited to engineering, by the way. Product, Sales, and Marketing are all moving in the same direction. Less time goes into first-draft production. More value comes from deciding, shaping, reviewing, and learning.

Faster delivery only helps if we build the right things

This may be the most important part of the whole discussion.

AI can compress the path from idea to prototype.

That is powerful.

But it also means weak prioritization becomes more dangerous. If teams can produce twice as much, but still work on the wrong things, the company does not get better. It just gets lost faster.

This is where an NSF Board becomes especially useful.

It forces the organization to ask:

- What outcome are we actually after?

- What problem are we trying to solve?

- What are we learning this cycle?

- How does this work connect to the larger direction?

Those are the questions that keep AI from turning into a new feature factory.

Final thought

A useful AI strategy is not a list of tools.

It is a clear view of what should improve, where leverage should come from, how different functions contribute, and what guardrails are needed to keep speed from eroding quality.

That is also why I think AI strategy should be visualized.

When you can see the North Star, the Product Driver, the Top Problems, the Current Goals, and The Work in one place, the conversation changes.

It becomes easier to discuss not just what the organization is doing with AI, but whether it is actually becoming better because of it.

That is the point.

And it is exactly the kind of conversation the NSF Board is built to support.